It’s a perennial problem: there’s so much testing to be done and not enough time in which to do it. I’ve already written one post about this issue, “What to Test When There’s Not Enough Time to Test,” which covers how to prioritize your testing and how to work with your team so that you don’t end up short on testing time. But this week I’d like to take a more general view of time management: how can we structure our days so we don’t feel continually stressed by the many projects we work on? Here are eight time management strategies that work for me:

Strategy One: Know Your Priorities

I have a bi-weekly one-on-one meeting with my manager, and in each meeting he asks me “What’s the most important priority for you right now?” I love this question, because it helps me focus on what’s most important. You may have ten different things on your to-do list, but if you don’t decide which things are the most important, you will always feel like you should be working on something else, which keeps you from focusing on the task at hand. I like to think about my first, second, and third priorities when I am planning what to work on next.

How do you decide what’s most important? One good way is to think about impacts and deadlines. If there is a release to Production going out tonight and it requires some manual testing, preparing for that release is going to be my top priority, because customers will be affected by the quality of the release. If I am presenting a workshop to other testers in my company tomorrow, I’m going to make preparing for it a priority. If you evaluate each task by its impact and its due date, the right order for your priorities will become clearer.

Strategy Two: Keep a To-Do List

Keeping a to-do list means that you won’t forget about any of your tasks. It does not mean that all of your tasks will get done. When I finally realized that my to-do list would never be completed, I was able to stop worrying about how many items were on it. I have found that the less I worry about how many things are on my to-do list, the more items I can accomplish.

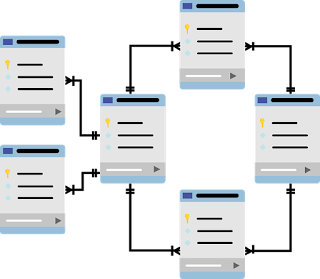

I use a Trello board for my to-do list, with these columns: Today, This Week, Next Week, Soon, and Someday. Whenever I have a new task, I add it to one of these columns. If it’s urgent, I put it in Today or This Week. Something less urgent goes in Next Week or Soon, and eventually moves to This Week or Today. Projects I’d like to work on or tech debt I’d like to address go in Someday. With this method, I don’t lose track of any of my tasks, and I always have something to do in those slow moments when I’m waiting for new features to test.

Strategy Three: Use Quiet Times for Your Deepest Work

One of the best things about working for a remote-friendly company is that my team is spread over four time zones. Since I am in Eastern Time, our daily meetings don’t start until late morning for me. This means that the first couple of hours of my workday are quiet and uninterrupted, so I use those hours for projects that require concentration and focus. My teammates on the West Coast do the opposite, using the end of their workday for their deepest work because the rest of us have already stopped for the day.

Even if you are in the same time zone as your co-workers, you can still carve out some quiet time to get your most challenging work done. Maybe your co-workers take a long lunch while you choose to eat at your desk. Or maybe you are a morning person and get into the office before they do. Take advantage of those quiet times!

Strategy Four: Minimize Interruptions

It should come as no surprise to anyone that we are interrupted by notifications on our phones and laptops several times every hour. Every time one of those notifications comes through, your concentration is broken as you stop to check whether it’s important. But how many of those notifications do you really need? I’ve turned off all notifications on my phone except text messages and work-related messages. Other notifications, such as LinkedIn, Facebook, and email, are not important enough to break my concentration.

On my laptop, I’ve silenced all of my notification sounds but one: the notification that I’m about to have a meeting. I’ve set my Slack notifications to pop up, but they don’t make a sound. That way I have fewer sounds disrupting my concentration.

Another helpful hint is to train your team to send you an entire message all at once, instead of sending messages like:

9:30 Fred: Hi

9:31 Fred: Good Morning

9:31 Fred: I have a quick question

9:33 Fred: Do you know what day our new feature is going to Production?

If you were to receive the messages in the above example, your work would be interrupted FOUR TIMES in less than four minutes. Instead, ask your co-workers to do this:

9:33 Fred: Good Morning! I have a quick question for you: do you know what day our new feature is going to Production?

In this way, you are only interrupted ONCE. You can answer the question quickly, and then get back to work.

Strategy Five: Set Aside Time for the Big Things

Sometimes projects are so big that they feel daunting. You may have wanted to learn a new test automation platform for a long time, but you never seem to find the time to work on it. While you know that learning the new platform will save you and your colleagues time in the long run, it’s not urgent, and the course you have in mind takes ten hours to complete.

Rather than trying to find a day or two to take the course, why not set aside a small amount of time every day to work on it? When I have a course to take, I usually set aside fifteen or twenty minutes at the very beginning of my workday to work on it. Each day I chip away at the coursework, and if I keep at it consistently, I can finish a ten-hour course in six to eight weeks. That may seem like a long time, but it’s much better than never starting the course at all!
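For the testers who like to check the math, here is a minimal Python sketch of that estimate. It assumes five workdays per week and a course of exactly ten hours; the function name `weeks_to_finish` is hypothetical.

```python
import math


def weeks_to_finish(course_hours: float, minutes_per_day: float, workdays_per_week: int = 5) -> float:
    """Estimate how many weeks a course takes at a fixed daily study pace.

    Assumes one study session per workday and five workdays per week by default.
    """
    total_minutes = course_hours * 60
    # Round up: a partial session still needs its own day.
    days_needed = math.ceil(total_minutes / minutes_per_day)
    return days_needed / workdays_per_week


for minutes in (15, 20):
    print(f"{minutes} min/day: {weeks_to_finish(10, minutes)} weeks")
```

At fifteen minutes a day, the 600 minutes of coursework take 40 workdays (eight weeks); at twenty minutes a day, they take 30 workdays (six weeks).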

Strategy Six: Ask For Help

We testers have a sense of personal pride when it comes to the projects we work on. We want to make sure that we are seen as technologically savvy, and not “just a tester”. We take pride in the automation we write. But the fact is that developers usually have more experience working with code than we do, and they might have ideas for better or more efficient ways of doing things.

Recently, I was preparing an example project that I was going to use to teach some new hires how to write unit tests. It was just a simple app that compared integers. I knew exactly how to write the logic, but when I went to compile my program, I ran into a “cannot instantiate class” error. I knew the cause was probably something tiny, but since I don’t often write apps on my own, I couldn’t remember what the issue was. I had a choice to make at this point: I could save my pride and spend the next two hours figuring out the problem by myself, or I could ask one of my developers to look at it and have him tell me what the problem was in less than ten seconds. The choice was obvious: I asked my developer, and he instantly solved the problem.

However, there is one caveat to this strategy: sometimes we can get into the habit of asking for information that we could easily find ourselves. Before you interrupt a coworker to ask for information, ask yourself whether you could find it with a simple Google search or a quick look through your company’s wiki. If you can find it yourself faster than you could ask your coworker and wait for a response, you’ve just saved yourself AND your coworker some time!

Strategy Seven: Take Advantage of Your Energy Levels

I am a morning person; I am the most energetic at the start of my workday. As the day moves along, my energy levels drop, and by the end of the workday it’s hard for me to focus on difficult tasks. Because of this, I organize my work so that I do my more difficult tasks in the morning and save the afternoon for more repetitive tasks.

Your energy levels might follow a different pattern. Think about the times of day when you do your best and worst work, and you will be able to figure out when your energy peaks. Plan your most challenging and creative work for those times!

Strategy Eight: Adjust Your Environment

I am very fortunate in that I am able to work remotely. This means I have complete control over the cleanliness, temperature, and sound in my office. You may not be so lucky, but you can still find ways to adjust your environment so that you work more efficiently. If your office is so warm that you find yourself falling asleep at your desk, you can bring in a small fan to cool the air around you. If you are distracted by back and shoulder pain that comes from slumping in your chair, you can install a standing desk and stand for part of your workday. If your coworkers are distracting you with their constant chit-chat, you can buy a pair of noise-cancelling headphones.

Experiment with what works best for you. What works for some people might not be right for you. You might work most efficiently with total silence, with white noise, with ambient music, with classical music, or with heavy metal playing in your ears. You might find that facing your desk so that you can look out the window helps you relax your mind and solve problems, or you might find it so distracting that you are better off facing empty white walls. Whatever your formula, once you’ve found it, make it work for you!

The eight strategies above can make it easier for testers to manage their time and work more efficiently. You may find that these strategies help in other areas of your life as well: paying bills, doing housework, tackling home improvement projects, and so on. If these tips work for you, consider passing them on to others in your life to help them work more efficiently too!