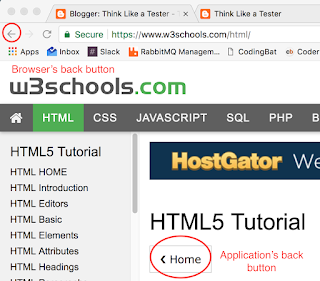

The back button is so ubiquitous that it is easily overlooked when it comes to web and application testing. The first thing to know when testing back buttons is that there are many different types. They fall into two major categories: native buttons, and buttons that are added into the application.

For native buttons, there are those that are embedded in the browser, those that are embedded in a mobile application, and those that are included in the hardware of a mobile device. In the screenshot above, the back arrow is the back button that comes with the Chrome browser. An Android device generally has a back button at the bottom that can be used with any application, and most iPhone apps have a back button built in at the top of most pages.

Added back buttons are used when the designer wants more control over the user’s navigation. In the example above, the Home button is used to go back to the W3Schools home page. (I should mention here that W3Schools is an awesome way to learn HTML, CSS, JavaScript, and much more: https://www.w3schools.com/default.asp)

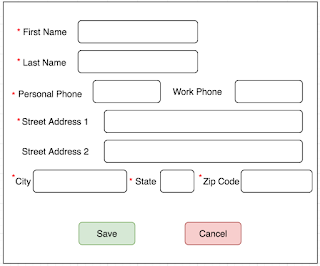

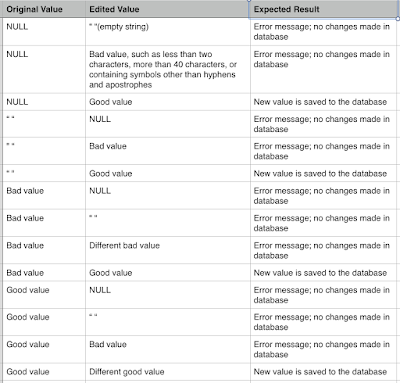

When you are testing websites and applications, it’s important to test the behavior of ALL of your back buttons, even those that your team didn’t add. The first thing to do is to think about where exactly you would like each of those buttons to go. This seems obvious, but sometimes you do not want your application to simply go back to the previous page. An example of this would be when a user is editing their contact information on an Edit screen. When the user has finished editing, has moved on to the Summary page, and then clicks the Back button, they shouldn’t be taken back to the Edit screen, because they may think they have to edit their information all over again. Instead, they should go back to the page they were on before the Edit screen.
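One common way to get this behavior is for the Summary page to replace the Edit screen in the navigation history rather than being added on top of it (in a browser, `location.replace` and `history.replaceState` work this way). Here is a minimal sketch of that idea as a toy Python model, so the expected result of pressing Back can be written down as a check. The `NavigationHistory` class and the page names, including "Profile" as the page before Edit, are made up for illustration and are not from any real framework.

```python
class NavigationHistory:
    """Toy model of a navigation history stack (hypothetical, for illustration)."""

    def __init__(self, start_page):
        self._stack = [start_page]

    @property
    def current(self):
        return self._stack[-1]

    def push(self, page):
        """Normal navigation: the new page is added on top of the history."""
        self._stack.append(page)

    def replace(self, page):
        """Replace the current entry, so Back will skip the page being left."""
        self._stack[-1] = page

    def back(self):
        """Go back one entry; stay put if there is nothing to go back to."""
        if len(self._stack) > 1:
            self._stack.pop()
        return self.current


history = NavigationHistory("Profile")  # assumed page before the Edit screen
history.push("Edit")                    # user opens the Edit screen
history.replace("Summary")              # saving replaces Edit with Summary
page_after_back = history.back()        # user presses Back on Summary
print(page_after_back)
```

In a real test you would drive the actual application, but the assertion is the same: after Back on the Summary page, the user should land on the page before Edit, not on Edit itself.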

Another thing to consider is how you would like back buttons to behave once the user has logged out of the application. In this case, pressing Back should not return anyone to the application with the user still logged in, because this is a security concern. What if the user was on a public computer? The next user would have access to the previous user’s information.
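The expected outcome of this test can be sketched as a simple rule: a request for a protected page from a logged-out user should end up at the login page. The example below is only a model of that rule under assumed names (`PROTECTED_PAGES`, `page_to_show`, and the page names are all hypothetical). In a real test you would also want to confirm that the browser does not simply redisplay a cached copy of the protected page.

```python
PROTECTED_PAGES = {"Account", "Orders"}  # hypothetical page names


def page_to_show(requested_page, logged_in):
    """Return the page the user should actually see for a request."""
    if requested_page in PROTECTED_PAGES and not logged_in:
        return "Login"
    return requested_page


# Scenario: the user views Account, logs out, then presses Back.
logged_in = True
visited = page_to_show("Account", logged_in)
logged_in = False                                # user logs out
after_back = page_to_show("Account", logged_in)  # Back requests Account again
print(visited, "->", after_back)
```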

For mobile device buttons, think about what behavior you would like the button to have when you are in your application. A user will be frustrated if they are expecting the back button to take them elsewhere in your app, and it instead takes them out of the app entirely.

If your application has a number of added back buttons, be sure to follow them all in the longest chain you can create. Look for errors in logic, where the application takes you somewhere you weren’t expecting, and for memory errors caused by saving too many pages in the application’s navigation history.
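If you write down where each added back button is supposed to lead, following the chain can be sketched in a few lines. This is a rough illustration, not a real test harness: the `follow_back_chain` function and the page map are hypothetical, and treating a loop as an error is an assumption that may not fit every application.

```python
def follow_back_chain(back_targets, start, max_steps=50):
    """Follow added back buttons from `start` and return the pages visited.

    `back_targets` maps each page to the page its back button leads to;
    a page with no entry has no added back button, which ends the chain.
    Raises ValueError if the chain loops back onto a page already visited.
    """
    visited = [start]
    page = start
    for _ in range(max_steps):
        if page not in back_targets:
            return visited
        page = back_targets[page]
        if page in visited:
            raise ValueError(f"back-button loop: {' -> '.join(visited + [page])}")
        visited.append(page)
    raise ValueError(f"chain longer than {max_steps} steps")


# Hypothetical application map: page -> where its back button should lead.
back_targets = {"Confirmation": "Payment", "Payment": "Cart", "Cart": "Home"}
chain = follow_back_chain(back_targets, "Confirmation")
print(chain)
```

Comparing the returned list against the chain you expected is a quick way to spot a button that leads somewhere surprising.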

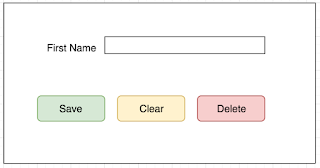

You can also check whether the back button is enabled or disabled at the appropriate times. You don’t want your user trying to click on an enabled back button that doesn’t go anywhere!
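As a small sketch of what "appropriate times" might mean, one reasonable rule is that the button is enabled only when there is a page to go back to. The `back_button_enabled` function and the page names below are assumptions for illustration; your application may have different rules.

```python
def back_button_enabled(history):
    """A back button should be enabled only if there is a page to go back to."""
    return len(history) > 1


history = ["Home"]          # first page: nothing to go back to
state_on_first_page = back_button_enabled(history)
history.append("Settings")  # user navigates forward
state_after_navigation = back_button_enabled(history)
print(state_on_first_page, state_after_navigation)
```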

In summary, whenever you are testing a web page or an application, be sure to make note of all of the back buttons that are available and all of the behaviors those buttons should have. Then test those behaviors and make sure that your users will have a positive and helpful experience.