As you know, this blog has focused on logical fallacies for the entire year. We’ve learned about all kinds of fallacies, from the Red Herring Fallacy to the Appeal to Ignorance Fallacy! It’s time now for the last blog post of the year: the Slippery Slope Fallacy.

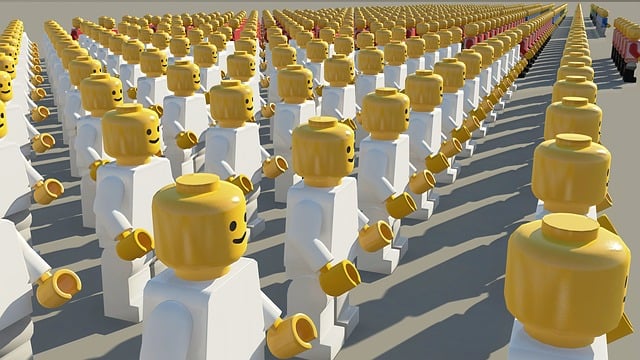

The Slippery Slope Fallacy occurs when someone assumes that one negative event will set off a chain of negative events ending in disaster, when there’s no evidence that each event will actually cause the next.

This is a common fallacy used by parents when they don’t want to let their teenagers do something. Imagine this scenario between a father and his daughter. “If I let you go to the rock concert and stay out until 2 AM on a school night, soon you’ll be staying out until 2 AM every night. Then you’ll be too tired to get up and go to school on time, which means that your grades will suffer, and then you won’t get into a good college.”

The fallacy is obvious to teenagers: staying out until 2 AM one night will not lead to staying out until 2 AM every night, because the parent won’t actually let that happen. The father in this example is using the Slippery Slope Fallacy as an excuse for why he doesn’t want his daughter to attend the concert.

The Slippery Slope Fallacy happens in software testing as well! You may have encountered a well-meaning tester who has found a small UI bug in the team’s application. They log the bug, but rather than letting it go to the backlog, they insist that the bug be fixed NOW. The logic they use goes something like this: “If we don’t make the developers fix this bug right now, it will mean that they will ignore bigger bugs in the future. Then we’ll wind up with a ton of tech debt that we will never be able to get out of, and our application will be filled with bugs. Our customers will desert us and then we will go out of business.”

The fallacy here might be a bit harder to see for testers who feel strongly that their application should be as close to perfect as possible. But here’s the error: putting one small bug on the backlog will not necessarily result in the team ignoring big bugs. A well-functioning team will have a triage process in place where the whole team can determine the user impact of a bug, how important the fix is compared to other tasks the team is working on, and the potential cost of waiting to fix the bug.

Yes, ignoring too many bugs can result in too much tech debt, but a small UI bug that doesn’t impact the functioning of the application is not going to significantly contribute to that debt. It’s important that a tester choose their battles and let some small bugs slide, because if they protest loudly about every bug, the team will stop taking them seriously.

I hope that you have enjoyed my series on logical fallacies! If you would like to learn about more fallacies, I have great news for you! In early 2024 I will be publishing a mini-book called “Logical Fallacies for Testers”, which will include the twelve fallacies I wrote about this year, plus three additional fallacies!